If Pilots Train In Flight Simulators, Why Can’t Robots? How To Teach UAV Drones To Avoid Collisions With Video Games

If pilots can train in flight simulators, why can’t robots? People learn from experience and get better at flying and at avoiding collisions. Neurala is using its bio-inspired intelligence engine to make better robot pilots by giving them experience with Microsoft Flight Simulator, in a project sponsored by NASA Langley Research Center. The goal is better collision avoidance systems for drone aircraft (UAVs), driverless cars and telepresence robots.

Humans instinctively spot aircraft in the sky. We don’t scan every inch of the heavens to do it; our eyes seem to gravitate naturally to an object flying among the clouds. Many animals share this instinct, which helps them survive by identifying potential dangers quickly, without wasting time.

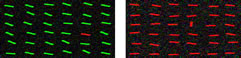

Look at the charts above. In each one, you can instinctively identify the line that doesn't belong: in one case the distinguishing factor is color, in another it is position. You did this quickly, without analyzing each line.

Robots are not “born” with this instinct. Given the example above, a traditional robot would search the entire collection of lines, checking each one against different parameters.
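The difference between "popping out" and rule-by-rule searching can be sketched with feature-contrast saliency, a common idea in visual-attention models (the article does not say Neurala uses this exact computation). Each item is scored by how far its features sit from the typical item, and the highest score wins, with no rule saying which feature to check. The function name `odd_one_out` and the (hue, position) encoding of each line are hypothetical, and the sketch assumes the features have already been measured from the image.

```python
from statistics import median


def odd_one_out(features):
    """Return the index of the item whose features stand out most.

    `features` holds one tuple of numbers per item, e.g. (hue_degrees,
    vertical_offset) for each line in a chart. This encoding is an
    assumption made for illustration.
    """
    n_dims = len(features[0])
    scores = [0.0] * len(features)
    for d in range(n_dims):
        column = [f[d] for f in features]
        typical = median(column)  # robust to the single outlier
        spread = (max(column) - min(column)) or 1.0  # avoid dividing by zero
        for i, value in enumerate(column):
            # Normalized distance from the typical value on this feature.
            scores[i] += abs(value - typical) / spread
    # The most salient item "pops out"; no rule names the feature to check.
    return max(range(len(features)), key=scores.__getitem__)


# Color differs: three lines with hue 0, one with hue 120, all at offset 0.
print(odd_one_out([(0, 0), (0, 0), (120, 0), (0, 0)]))  # 2
# Position differs: one line shifted by 30 units.
print(odd_one_out([(0, 0), (0, 30), (0, 0), (0, 0)]))  # 1
```

Every item is scored by the same simple computation, so the work maps naturally onto parallel hardware instead of a sequence of feature-specific searches.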

The same is true of traditional UAVs: flying robots scan the entire horizon, or large regions of it, to identify objects in motion, often using active sensors such as radar and laser range-finders. However, these active sensors are costly and heavy, and they drain battery power.

Neurala’s bio-inspired approach mimics animal brains, which means robots can be trained like animals. Instead of following rules, such as a rule to search the sky for motion, the robot is “rewarded” for making a proper decision, much as a pet is given a treat to reinforce good behavior. This lets the robot’s intelligence software weigh all of the factors available to it, such as color, gradient, intensity, edges and, of course, motion. All of these factors can be captured with a low-cost, off-the-shelf camera.
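Learning from rewards alone can be illustrated with a toy learner. This is a minimal sketch, not Neurala's method, which the article does not describe: it substitutes a generic policy-gradient rule (REINFORCE) and a synthetic scene generator. Each hypothetical scene has four candidate image patches described by three assumed features (intensity, edge strength, motion). The learner guesses which patch holds the plane, receives a reward of 1 only when it is right, and is never told which feature matters. The names `make_scene`, `train` and `accuracy` are illustrative.

```python
import math
import random

# Hypothetical feature layout per candidate patch: (intensity, edges, motion).
N_FEATURES = 3
N_PATCHES = 4


def make_scene(rng):
    """Synthetic scene: one target patch (the plane) among distractors.

    Assumption for illustration: the target moves more than the background,
    while intensity and edges are uninformative noise.
    """
    target = rng.randrange(N_PATCHES)
    patches = []
    for i in range(N_PATCHES):
        motion = rng.uniform(0.8, 1.0) if i == target else rng.uniform(0.0, 0.5)
        patches.append([rng.random(), rng.random(), motion])
    return patches, target


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def train(trials=3000, lr=0.1, seed=0):
    """Learn feature weights from reward alone (REINFORCE policy gradient)."""
    rng = random.Random(seed)
    w = [0.0] * N_FEATURES
    baseline = 0.0  # running average reward, reduces update variance
    for _ in range(trials):
        patches, target = make_scene(rng)
        scores = [sum(wi * xi for wi, xi in zip(w, x)) for x in patches]
        probs = softmax(scores)
        # Sample a guess from the current policy.
        choice = rng.choices(range(N_PATCHES), weights=probs)[0]
        reward = 1.0 if choice == target else 0.0  # the "treat"
        advantage = reward - baseline
        # Gradient of the chosen patch's log-probability w.r.t. each weight.
        for d in range(N_FEATURES):
            expected = sum(p * x[d] for p, x in zip(probs, patches))
            w[d] += lr * advantage * (patches[choice][d] - expected)
        baseline += 0.05 * (reward - baseline)
    return w


def accuracy(w, scenes=500, seed=1):
    """Fraction of fresh scenes where the top-scoring patch is the target."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(scenes):
        patches, target = make_scene(rng)
        scores = [sum(wi * xi for wi, xi in zip(w, x)) for x in patches]
        hits += scores.index(max(scores)) == target
    return hits / scenes


weights = train()
print("learned weights:", [round(x, 2) for x in weights])
print("accuracy:", accuracy(weights))
```

Subtracting the running-average baseline does not change the expected update, but it makes each update less noisy. The weight on motion grows simply because choosing high-motion patches is what earns rewards.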

The following video shows training in Microsoft Flight Simulator under various weather conditions. The aircraft is quite small, occupying just a few pixels on the screen. Neurala's robotic intelligence software was trained on this video, and each time it correctly spotted the distant airplane in the background, it received a reward.

[av_video src='http://youtu.be/ntL9R2lEogg' format='4-3' width='16' height='9']

Neurala's software learned from its experience in the flight simulator. Without any programmed rules, it quickly learned to identify the sky, ground and clouds in a variety of weather conditions, and it learned and remembered what a plane looks like in each of these environments. So it wasn’t looking for just one thing, such as motion; it looked at the whole scene and recognized something familiar from its experience.

As a result, the plane was spotted with high probability regardless of where the UAV flew or what the weather was like. In a sense, this mirrors the way human pilots gain experience in a flight simulator.

Neurala’s patented processing methodology handles multiple inputs at the same time to make decisions, leveraging the massively parallel Graphics Processing Units (GPUs) commonly found in personal computers and mobile devices. This works much the way our own brains do: they are massively parallel, made of billions of neurons and trillions of synapses.

Of course, it is one thing for a pilot to learn in a flight simulator. How would the software work in the real world on a real aircraft?

To find out, NASA Langley ran the trained Neurala software on video captured by a real drone in flight. The mission was to recognize another drone flying in the physical world. As the video below shows, the software clearly picked out the drone and ignored other items that might confuse a traditional robot.

[av_video src='http://youtu.be/rn7zFtGti48' format='4-3' width='16' height='9']

Neurala believes this bio-inspired approach can be extended to other types of robots to improve accuracy and performance. For example, driverless cars should be able to segment the view and make decisions based on the situation: an approaching overhead sign may need to be read, while a sign in the middle of the road must be avoided (and read). For a car, a moving airplane in the sky is usually not a risk, but a moving ball on the ground can cause an accident. The bio-inspired approach can help the car learn the difference.

So the next time you see a robot playing a video game such as Microsoft Flight Simulator, it may just be learning what to do before it goes out into the real world.